Executive Summary

The Problem: The emergence of Generative AI has created new reputational threats. AI-synthesized narratives, often containing “hallucinations” or amplifying negative content, have become the de facto source of truth for many users. As a result, traditional Online Reputation Management (ORM), which focuses solely on search engine results page (SERP) suppression, is obsolete or soon will be.

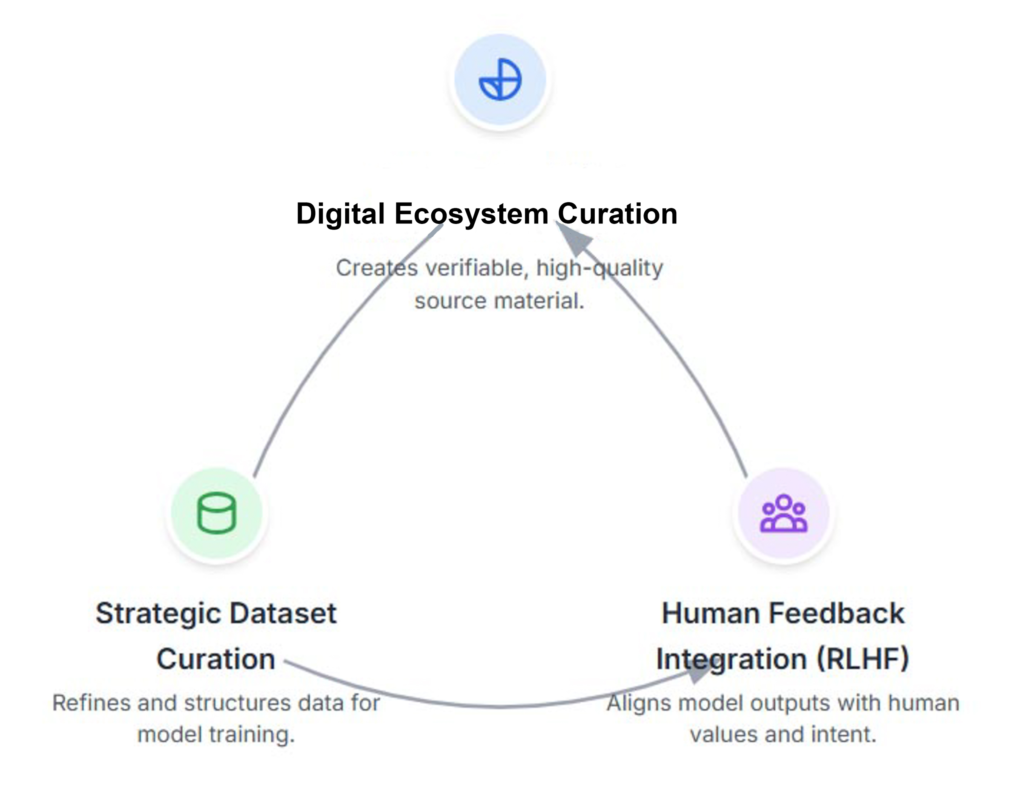

The Solution: This report introduces a proprietary, validated three-pillar framework developed through a year of intensive research and real-world application. It provides a methodology for managing and repairing reputations within Large Language Models (LLMs) like ChatGPT and Gemini.

The LLM Reputation Framework:

- GenAI Reputation: Curating online data with a focus on accurate information.

- Human Feedback (RLHF): Refining AI models to correct inaccuracies and build a positive reputation.

- Dataset Creation: Building a high-quality, verified library of information to fill knowledge gaps.

The Results: The framework has been validated through two case studies, demonstrating 100% suppression of the targeted negative content across ChatGPT, Gemini, and Google.

The Imperative: Mastering AI reputation is no longer a niche function but a strategic necessity for risk mitigation, brand resilience, and demonstrating a commitment to ethical and truthful reputations across platforms.

Note: This guide is based on a year of my own dedicated, original research. It presents my findings and a practical, tested methodology for repairing reputations in LLMs such as ChatGPT and Gemini.

New Problems: How LLMs Construct and Distort Reputations

Several key failure modes emerge from LLMs, each posing a new threat to individuals, brands, and communities.

- Hallucinations: AI confidently generates responses that appear credible but are factually incorrect or are fabricated. Because these outputs seem authoritative, they are easily mistaken for being true, leading to the rapid dissemination of misinformation.

- Damaging Information: LLMs echo negative online information and present it prominently.

- Amplifying Inaccuracies: Importantly, GenAI can resurface harmful links, meaning that links previously suppressed in search results can still appear in LLM outputs.

Three-Pillar Framework for AI Reputation Management

The Core Principle: A Dual-Front Strategy

A successful strategy for managing reputation should address two fronts: public online information and the internal mechanics of the AI models. Treating the problem as purely a traditional ORM task, or as a purely technical one, will probably fail.

The Misinformation Feedback Loop

An ORM-only strategy is insufficient because it does not directly address AI summaries. On the other hand, a strategy that only provides direct feedback to the AI model is ineffectual, since the model will rediscover the negative information online during its next refresh cycle.

The Solution: A Lasting Reputational Fix

This proprietary framework is designed to break this feedback loop. It operates on the principle that to achieve a lasting reputational fix, one must simultaneously correct the web information and retrain the model.

Pillar I: Proactive Online Reputation: The Evolution of ORM

The first pillar is a proactive online reputation management strategy focused on shaping the web information that AI models use. The goal is to construct a dense, credible, and easily parsable body of factual information that becomes the preferred basis for an LLM's answers. Key tactics include:

- Creating Authoritative Content: Publish high-quality, in-depth articles, white papers, and presentations that demonstrate experience, expertise, authoritativeness, and trustworthiness (E-E-A-T).

- Optimizing for AI Readability: Structure content so that AI systems can parse it easily. Use clear headings and subheadings, concise bullet-point summaries, comprehensive FAQ sections that directly answer potential user queries, and schema markup (structured data).

- Establishing High-Authority Entities: Build and maintain a strong, consistent presence on platforms that LLMs weigh heavily in their training data, such as Wikipedia, a comprehensive LinkedIn profile, Reddit posts and mentions in high-authority publications. These act as powerful signals of credibility.
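As one illustration of the schema tactic above, the following minimal sketch generates schema.org `FAQPage` markup as JSON-LD. The helper name `build_faq_jsonld` and the sample question and answer are hypothetical placeholders, and the sketch assumes the FAQ content has already been written and verified.

```python
import json


def build_faq_jsonld(faqs):
    """Return a schema.org FAQPage JSON-LD string for (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical placeholder content, not taken from any real profile.
faqs = [
    ("Who is Jane Doe?", "Jane Doe is the founder and CEO of Example Capital."),
]

# The markup is embedded in the page inside a JSON-LD script tag.
markup = build_faq_jsonld(faqs)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same verified question-and-answer pairs that appear in the visible FAQ section keeps the structured data and the on-page text consistent.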

Pillar II: Direct AI Model Refinement (RLHF)

The second pillar uses direct feedback, or Reinforcement Learning from Human Feedback (RLHF). RLHF refines outputs by adding nuance and fuller context. The process involves:

- Collect Preference Data: Generate multiple AI responses to a specific prompt. Human evaluators then review responses based on criteria like accuracy, tone, and completeness.

- Train a Reward Model: Use the collected preference data to train a “reward model” that learns to predict which outputs a human evaluator would rate highly.

- Fine-Tune LLM: Use the reward model to adjust the LLM so that it favors authoritative information and suppresses inaccurate narratives.
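The reward-model step above can be sketched in a few lines. This is a toy illustration of the general mechanism, not the procedure applied to ChatGPT or Gemini in this report: it assumes each response has already been scored by evaluators on three hypothetical features (accuracy, tone, completeness, each between 0 and 1) and fits a linear reward model with the standard Bradley-Terry pairwise loss. The function names and all numbers are made up for illustration.

```python
import math


def reward(weights, features):
    """Linear reward: weighted sum of a response's feature scores."""
    return sum(w * x for w, x in zip(weights, features))


def train_reward_model(pairs, n_features, lr=0.1, epochs=200):
    """Fit weights on (chosen, rejected) feature pairs.

    Minimizes the Bradley-Terry loss -log(sigmoid(r_chosen - r_rejected))
    with plain stochastic gradient descent.
    """
    weights = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # 1 - sigmoid(margin): large when the model still prefers the wrong response.
            grad_scale = 1.0 - 1.0 / (1.0 + math.exp(-margin))
            for i in range(n_features):
                weights[i] += lr * grad_scale * (chosen[i] - rejected[i])
    return weights


# Hypothetical preference data: [accuracy, tone, completeness] per response,
# ordered as (response the evaluator preferred, response the evaluator rejected).
pairs = [
    ([0.9, 0.7, 0.8], [0.3, 0.8, 0.5]),
    ([0.8, 0.6, 0.9], [0.4, 0.6, 0.4]),
    ([0.7, 0.9, 0.6], [0.2, 0.9, 0.7]),
]

weights = train_reward_model(pairs, n_features=3)
for chosen, rejected in pairs:
    print(reward(weights, chosen) > reward(weights, rejected))
```

In a full RLHF pipeline the reward model is a neural network over the response text, and the fine-tuning step then optimizes the LLM against it. With hosted models, an outside party's contribution is usually limited to supplying the preference signal through the platform's feedback tools.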

Pillar III: Strategic Dataset Curation

The final pillar is the proactive and systematic creation of high-quality, verified datasets. Data quality is critical, and the datasets serve three purposes:

- Fill Knowledge Gaps: Add authoritative information to fill information gaps or counter negative narratives. This ensures the AI has a positive and factual basis for its answers, especially on topics where the public record is sparse or damaging.

- Serve as an Authoritative Reference: This collection of published, high-quality datasets serves as the go-to source for verifying what is accurate.

- Mitigate Algorithmic Bias: Publishing factual information helps correct negative bias that may exist in the training data, influencing the AI to generate more balanced and favorable summaries.
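One way to keep such a dataset verifiable is to store each fact with its source and verification date. The sketch below is an assumption about how that could look, not the format used in this research: the `FactRecord` fields, the HTTPS check, and the sample entry are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class FactRecord:
    """One verified statement plus the evidence that backs it (hypothetical schema)."""
    subject: str
    claim: str
    source_url: str
    verified_on: date

    def validate(self):
        if not self.claim.strip():
            raise ValueError("claim must not be empty")
        if not self.source_url.startswith("https://"):
            raise ValueError("source_url must be an https:// link")


def to_jsonl(records):
    """Validate records and serialize them as JSON Lines, one fact per line."""
    lines = []
    for record in records:
        record.validate()
        row = asdict(record)
        row["verified_on"] = record.verified_on.isoformat()
        lines.append(json.dumps(row, ensure_ascii=False))
    return "\n".join(lines)


# Placeholder entry for illustration only.
records = [
    FactRecord(
        subject="Example Corp",
        claim="Example Corp was founded in 2015.",
        source_url="https://example.com/about",
        verified_on=date(2024, 1, 15),
    ),
]
print(to_jsonl(records))
```

Rejecting entries that lack a source at write time means every published claim can be traced back to evidence later.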

Framework Validation: Reputation Case Studies & Results

Methodology and Measuring Reputational Shifts

To validate the framework, results were measured across both search engines and generative AI platforms.

Data Collection Methodology

- Web Content Analysis: Systematic review of damaging and corrective online content, including screenshots.

- LLM Output Archiving: Time-stamped archiving, including screenshots, of AI-generated responses to document change.
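The time-stamped archiving described above could be kept as structured records alongside the screenshots. The following is a minimal sketch under that assumption; the function names, field names, and sample prompt are hypothetical, and the response text is assumed to have been copied manually from the platform.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_archive_record(platform, prompt, response):
    """Build a time-stamped record of one AI response.

    The SHA-256 digest makes later edits to the stored text detectable.
    """
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "platform": platform,
        "prompt": prompt,
        "response": response,
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
    }


def append_record(path, record):
    """Append one record to a JSON Lines archive file."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")


# Placeholder example; a real run would paste the actual model output.
record = make_archive_record(
    platform="ChatGPT",
    prompt="Who is Jane Doe?",
    response="(response text copied from the platform)",
)
print(json.dumps(record, indent=2))
```

Re-running the same prompt on a fixed schedule and appending each record gives a dated series that documents how the AI output changes over the campaign.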

Key Performance Indicators (KPIs)

- SERP Analysis: Tracking keyword rankings to measure the suppression of negative content.

- Web Analytics: Monitoring organic traffic, click-through rates (CTR), and backlink acquisition via Google Search Console.

- Future Elements: Add sentiment analysis to measure public perception, and an AI Output Score, a quantitative (1-5) rating of AI outputs.
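The KPIs above reduce to simple calculations. The sketch below shows one possible way to compute them; the function names are hypothetical, the first page is assumed to be the top 10 results, and the AI Output Score is assumed to be the mean of the evaluators' 1-5 ratings.

```python
from statistics import mean


def click_through_rate(clicks, impressions):
    """CTR as a fraction: clicks divided by impressions."""
    return clicks / impressions if impressions else 0.0


def first_page_suppression_rate(negative_url_ranks, page_size=10):
    """Share of tracked negative URLs that no longer rank on the first page.

    Each entry is the URL's current rank, or None if it is not ranked at all.
    page_size=10 is an assumption about how many results count as page one.
    """
    if not negative_url_ranks:
        return 0.0
    suppressed = sum(1 for rank in negative_url_ranks if rank is None or rank > page_size)
    return suppressed / len(negative_url_ranks)


def ai_output_score(ratings):
    """Average of evaluator ratings, each on the 1-5 scale."""
    if not ratings or any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must be non-empty and between 1 and 5")
    return mean(ratings)


# Illustrative numbers only, not results from the case studies.
print(click_through_rate(clicks=50, impressions=1000))
print(first_page_suppression_rate([12, None, 3, 25, 40]))
print(ai_output_score([4, 5, 3]))
```

Tracking these three numbers at each monthly checkpoint makes the search and AI sides of a campaign comparable over time.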

Case Study A: Neutralizing a C-Suite Smear Campaign

- The Challenge: A hedge fund CEO was targeted by a smear campaign that resulted in five defamatory posts dominating his Google search results. Compounding the issue, Google’s Gemini (then known as Bard) provided no information about him, creating a dangerous “information vacuum” that threatened investor confidence.

- The Solution: A six-month, 200-hour campaign was implemented. The key steps for the online presence were creating a personal website, optimizing professional profiles (Crunchbase, LinkedIn, etc.), and publishing expert articles. This and other new content served as a curated dataset, and feedback tools were used to reinforce the new, accurate information.

- The Results: Within four months, all defamatory posts were suppressed from the first page of Google, replaced by the new positive assets. Gemini’s output was transformed from providing no information to generating a detailed, positive summary of the CEO’s career. The proactive content strategy successfully neutralized the smear campaign on both search and AI platforms.

Case Study B: Correcting False Narratives for a Global Company

- The Challenge: In early 2024, a sustainable energy company was targeted by a competitor, causing six damaging articles to dominate Google’s first page in the U.S. and Europe. ChatGPT amplified these false claims about the company’s CEO, threatening its ability to secure investors and expand international partnerships.

- The Solution: A six-month, multinational strategy included enhancing existing online platforms, creating a new website for the CEO, and updating the company’s Wikipedia page with verified facts. A persistent feedback campaign was launched with ChatGPT to flag false narratives and provide links to the new, authoritative content.

- The Results: Within six months, all negative search results were suppressed from the first page in all targeted countries. In four months, ChatGPT shifted to generating a detailed, positive, and factually accurate summary of the company. The campaign successfully countered the competitor’s fabrications and turned ChatGPT from a liability into an asset, restoring trust among investors and partners.

Timelines for Reputational Recovery

The case studies show meaningful progress within 30-60 days and a comprehensive reputational shift within 6 months, which is faster than industry benchmarks. A typical six-month project lifecycle follows these phases:

- Month 1: Research & Strategy

- Month 2: Visibility Boost

- Month 3: Suppression & Refinement

- Months 4-5: Strategic Growth

- Month 6: Maintenance & Consolidation

Conclusion: The Future of Reputation Management is LLMs

A New Paradigm in Reputation Management

The rise of generative AI has fundamentally altered reputation management. This report has argued that the resulting challenge cannot be solved with ORM alone or with piecemeal solutions; it requires a holistic, proactive strategy that addresses both online information and the LLM systems that interpret it.

A Validated Framework for a New Era

In this paper, I proposed and validated a three-pillar framework that synthesizes proactive online reputation management, Reinforcement Learning from Human Feedback (RLHF), and strategic dataset curation. Through the successful resolution of two distinct, real-world cases, I show that this integrated strategy can effectively suppress false narratives on the web, correct inaccurate AI-generated outputs, and build a resilient and positive cross-platform reputation. The framework’s results, achieving 100% suppression of negative content from top search results and completely transforming AI narratives within a six-month period, highlight the value of this structured, data-driven methodology.

The Path Forward: An Ongoing Commitment

The future of reputation management is no longer only about managing online search results; it is also about mitigating AI-driven misinformation. This requires continuous monitoring, rigorous ethical oversight, and a deep-seated commitment to human-AI collaboration. Through this collaborative effort, we can shape a digital future built on trust, accuracy, and equity.